We are in an ongoing battle for search rankings, fought on two fronts: Google, which constantly changes its search algorithm, and unethical SEOs, who constantly try to exploit it.

SEO today is a complicated process, largely because Google’s search algorithm has become so complex. Before it reached that point, there were many ways to exploit its simpler predecessors and take the top spot in search results.

Popular Unethical SEO Methods

Unethical SEO, also known as black hat SEO, exploits Google’s search algorithms to gain rankings quickly. You don’t want to be on this side, though: the penalties are severe once you’re caught, and your site can disappear from the top 100 search results entirely.

Here are some of the methods that were used to exploit Google’s search algorithms.

Unethical Link Building

In its early days, Google didn’t care where your links came from, as long as the linking domains had a high PageRank, the links were do-follow, and they used the anchor text you were targeting. It almost seemed as though Google equated quantity with quality.

Link Exchanges

Site owners would exchange backlinks with each other, usually within the same niche and industry, to keep the links relevant. You may think this is a legitimate way to build out your link profile, but in Google’s eyes, it isn’t. Google favours natural link building, and exchanging links to manipulate rankings is considered a link scheme that violates its Webmaster Guidelines.

Guest Blog Link Building

Guest posts are actually a great way to build links to your site if done right. The black hat way of doing guest blog link building, however, is to supply low-quality or spun articles (more on article spinning later). Inexperienced bloggers would see this as an opportunity to build out content for their site, but it would only hurt their blog, because the posts would be flagged as duplicate or low-quality content.

Tiered Link Building

Having a huge number of backlinks pointing directly to your site was once one of Google’s largest ranking factors. With later algorithm updates, however, Google learned to recognize spammy backlinks and penalized sites that had them.

Black hatters responded with tiered link building, which added layers of protection around their main site. Most people used up to three tiers. Building them took a lot of work, but automation tools made the job much easier.

The first layer of protection (Tier 1s) linked directly to your main site. These Tier 1 sites usually came from Web 2.0s (Weebly, Tumblr, WordPress, Blogger, Blogspot, etc.), expired domains, and more.

Because these sites pointed directly to the main site, their links had to look natural, and their content had to be readable and avoid duplicate content penalties.

However, for Tier 1 links to pass any ranking juice to your site, they needed backlinks of their own, which is where Tier 2 backlinks came in: links built to the Tier 1 sites rather than to the main site.

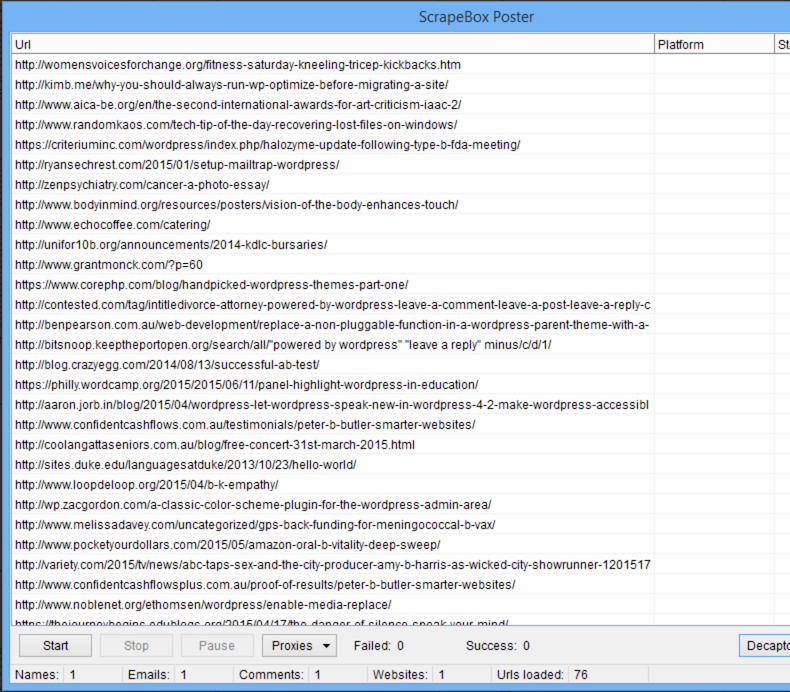

Automation Tools

Building thousands of backlinks by hand in a short period of time would be extremely time-consuming, so black hatters built tools just for this. Here are a couple of examples:

GSA SER (Search Engine Ranker) & Scrapebox

Random blog comments are everywhere, and your site has probably been hit by them. Most of those comments aren’t left by actual people; they’re posted by bots.

GSA SER and Scrapebox were used to find sites in your niche and drop your links, with the anchor text you wanted, onto those sites. Forums, blog comments, and Web 2.0s were the main targets. For sites that required registration, the tools could even create the account automatically in order to post.

Chimp Rewriter

Writing content takes a lot of time, and creating thousands of links through guest blog posts means a ton of writing.

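That’s where “spinning” an article comes in: automatically rewriting the same content so that each copy appears unique to Google. Spinning tools use a syntax called spintax, in which each set of braces holds alternative phrases separated by pipes, and one alternative is picked from each set.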

Let’s look at this example: {This is|Look} {a sentence|a post|content}.

It would output one of six variants: “This is a sentence,” “This is a post,” “This is content,” “Look a sentence,” “Look a post,” or “Look content.”
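To make the mechanics concrete, here is a minimal Python sketch that expands a flat spintax template into every variant it can produce. It assumes groups are not nested (some spinners also support nested groups, which this sketch does not handle) and that options are separated by `|`; the function name `expand_spintax` is hypothetical and not taken from Chimp Rewriter or any other tool.

```python
import itertools
import re

# Matches one flat spintax group such as "{a sentence|a post|content}".
# Assumption: groups are never nested inside each other.
GROUP = re.compile(r"\{([^{}]*)\}")


def expand_spintax(template: str) -> list[str]:
    """Return every variant a flat spintax template can produce."""
    parts = []
    last = 0
    for match in GROUP.finditer(template):
        # Literal text between groups has exactly one "option": itself.
        parts.append([template[last:match.start()]])
        # A spintax group contributes all of its pipe-separated options.
        parts.append(match.group(1).split("|"))
        last = match.end()
    parts.append([template[last:]])
    # The Cartesian product of all option lists yields every combination.
    return ["".join(choice) for choice in itertools.product(*parts)]


if __name__ == "__main__":
    for variant in expand_spintax("{This is|Look} {a sentence|a post|content}."):
        print(variant)
```

A spinner would typically pick one variant at random for each copy rather than listing them all, but enumerating them shows how fast the number of “unique” articles grows: it is the product of the option counts, here 2 × 3 = 6.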

Using AI, Chimp Rewriter could automatically generate the spintax from an article you provided, producing new sentences with different phrasing.

However, the output often wasn’t fully readable to a human. At the time, all Google cared about was that the content was unique. That has since changed, and Google now uses readability as a ranking factor.

PBNs

Private Blog Networks (PBNs) are similar to tiered link building. The method became popular because of how powerful it was, and it is still alive today.

In effect, your PBN sites are your Tier 1s: you run your own network of blogs and create content for each site, which is a lot of work.

To cut down the workload, people would either pay for articles or use Chimp Rewriter.

Hidden Anchor Text

The number of times your targeted keyword showed up on your page used to be a huge factor for Google. This method is similar to keyword stuffing, except that the words are hidden.

A wall of text containing all the keywords would usually be placed at the bottom of the page, in the same font color as the background. This kept pages looking clean to human visitors while the text remained fully visible to Google.

Web 2.0s

Web 2.0s were highly popular in the early 2010s. Sites like Weebly, Blogger, LiveJournal, Diigo, Angelfire, etc. were popular targets for black hat SEOs because of their high domain authority.

The best part? Signing up for these platforms was free, and most of them gave you do-follow links. At the time, Google also favoured links from Web 2.0s.

Automation tools could create accounts in bulk and post content to these Web 2.0s.

A Brief History of Google Algorithm Updates

You may not be familiar with these names, but here’s a quick history lesson on Google’s early algorithm updates.

Florida

Florida, launched in November 2003, was Google’s first major algorithm update, and it kicked off a new era of SEO. Up to that point, invisible anchor text, invisible links, and keyword stuffing were the biggest ways to get ranked. Websites using the spammy techniques the update targeted were wiped out right before the holiday season.

Jagger

In 2005, link building played a big part in SEO, and many sites took advantage of it by building spammy, unnatural links to their websites. Jagger, released in three stages from September to November, targeted backlink spammers most of all.

People using technical tricks (such as using CSS to hide text from users while keeping it visible to crawlers) were also hit hard.

Big Daddy

Big Daddy was a major update that didn’t primarily target black hat SEO; it was meant more to improve the quality of search results. It was an infrastructure update that focused on URL canonicalization (specifying which URL to show in search results), the inurl: search operator (finding pages with a certain word in the URL), and 301 and 302 redirects.

This highlighted the importance of technical SEO, which is still a major factor today: broken links or broken pages can hurt a page’s rankings.
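Canonicalization means collapsing the many addresses that can serve the same page into one preferred URL. The Python sketch below illustrates the idea under a set of assumed rules (force `https`, lowercase the host, drop a leading `www.`, drop default ports and fragments). These rules and the `canonicalize` name are illustrative assumptions, not the rules Google applied in Big Daddy, which were never published.

```python
from urllib.parse import urlsplit, urlunsplit


def canonicalize(url: str) -> str:
    """Collapse common variants of one page's URL into a single form.

    Assumed, illustrative rules: force https, lowercase the host,
    strip a leading "www.", drop default ports (80/443) and any
    fragment, and turn an empty path into "/".
    """
    parts = urlsplit(url)
    # .hostname is already lowercased by urlsplit; strip a "www." prefix.
    host = parts.hostname or ""
    if host.startswith("www."):
        host = host[len("www."):]
    # Keep only non-default ports in the network location.
    port = parts.port
    netloc = host if port in (None, 80, 443) else f"{host}:{port}"
    path = parts.path or "/"
    # Rebuild the URL without the fragment ("#...").
    return urlunsplit(("https", netloc, path, parts.query, ""))


if __name__ == "__main__":
    for variant in ["http://WWW.Example.com", "https://example.com:443/#top"]:
        print(variant, "->", canonicalize(variant))
```

Whether `www` and non-`www` hosts should count as the same site is a choice each site owner makes, which is why explicitly telling search engines your preferred URL matters.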

Vince

Vince was a quick update released in January 2009, and it made big brands ‘win’ by pushing them onto the first page of results. At the time, the first page was crowded with affiliate sites and black hat spammers. A major reason for the update was that Google valued trust, quality, and relevance, which is what big brands brought to the table.

This update emphasized the importance of having authority in a certain industry.

Google Updates that Killed These Methods

When it comes to the war between Google and black hatters, the names Panda, Penguin, and Hummingbird come up constantly.

Panda

As a push for quality, Google released Panda, its first major update aimed at rewarding high-quality websites. Here are some of the signals Google used to judge a site’s quality.

Low-Quality Content

People are looking for answers, and if your site can’t provide them, Google won’t see it as an authority in the industry, so why would it show your website to people looking for answers?

If you look at high-ranking blog posts on Google, you’ll notice they’re usually thousands of words long, because they aim to answer questions thoroughly.

Duplicate Content

Stealing content was a common practice among black hatters, while high-quality websites put out unique content. Panda helped Google identify which sites were copying content and gave it the ability to devalue those sites in search results.
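Google hasn’t published how Panda detects copied content. One classic, well-documented way to measure how much two texts overlap is to compare their sets of word shingles (overlapping runs of k words) using Jaccard similarity. The Python sketch below shows that general technique; the function names and the default of k = 3 are illustrative assumptions, not Google’s method.

```python
import re


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word shingles (overlapping word runs) in text."""
    # Lowercase and keep only word characters so punctuation doesn't matter.
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard_similarity(a: str, b: str, k: int = 3) -> float:
    """Shared shingles divided by all distinct shingles (1.0 = same set)."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa and not sb:
        # Two texts too short to form any shingle are treated as identical.
        return 1.0
    return len(sa & sb) / len(sa | sb)


if __name__ == "__main__":
    original = "the quick brown fox jumps over the lazy dog"
    copied = "the quick brown fox jumps over the lazy cat"
    print(jaccard_similarity(original, copied))
```

Exact copies score 1.0 and unrelated texts score near 0.0. Spinning tries to push this kind of score down by swapping phrases so that fewer shingles match.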

Low-Quality Guest Posts

Submitting guest posts is a great way to get high-quality links, but rejection can happen. It sucks, but don’t take it personally. Google devalues sites with low-quality content, so a low-quality guest post could hurt the site that publishes it. All they’re doing is protecting themselves!

Ad Ratio

A high ratio of ads to content is a huge red flag for a spammy site. It also reveals the site’s main intent, which is to make money rather than provide value.

Penguin

Google needed Penguin to keep fighting black hatters, and this is the update that had a significant impact on them.

A site’s link profile was one of the biggest clues as to whether it had been ranked through black hat techniques. Google looked at where your links came from, how frequently exact-match anchor text appeared, how often new links were coming in, how fast they came in, and more.

As a result, a ton of black hat sites were wiped from search results overnight.
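Google never disclosed how Penguin weighed these signals, but one of them, the frequency of exact-match anchor text, is easy to illustrate. The Python sketch below computes the share of a link profile whose anchor text exactly matches a target keyword. The function name and the sample profile are hypothetical, and no particular threshold is implied.

```python
def exact_match_share(anchors: list[str], keyword: str) -> float:
    """Return the fraction of anchors that exactly match the keyword.

    Matching ignores case and surrounding whitespace.
    An empty link profile returns 0.0.
    """
    if not anchors:
        return 0.0
    target = keyword.strip().lower()
    matches = sum(1 for anchor in anchors if anchor.strip().lower() == target)
    return matches / len(anchors)


if __name__ == "__main__":
    # Hypothetical link profile: 7 of 10 backlinks use the same money keyword.
    profile = ["cheap running shoes"] * 7 + ["example.com", "click here", "this review"]
    print(exact_match_share(profile, "cheap running shoes"))  # 0.7
```

A naturally earned profile tends to mix branded, bare-URL, and generic anchors, so a profile where most anchors repeat one commercial phrase stands out.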

Hummingbird

Hummingbird was a significant update that shifted Google’s focus from combatting black hatters to serving users, which gave websites more opportunities to rank higher.

Semantic Search

Instead of focusing on exact-match keywords, Google interprets the user’s natural language and looks for related keywords.

Conversational Search

This was the core of the Hummingbird update: handling queries phrased the way people talk, such as “where is the nearest ice cream shop,” “how do I make more money,” or “what time does the gym close.” The purpose was to keep search more natural, and it pushed website owners to stop trying to game the algorithm and to write content for people, not robots.

Fred

Fred, which rolled out in March 2017, is the most recent of the big algorithm updates covered here. It targets black hat sites that focus heavily on monetization. Such sites usually neglect user experience, which is what Google is trying to push.

Sites affected by this update have seen up to a 90% drop in rankings, and here are some of the key factors that caused it.

Misleading ads

Google has gotten much smarter over the years and can tell whether two things are related, which means it can tell whether an ad on your site is misleading. Misleading ads lead to negative user experiences.

This included ads that tricked visitors into clicking them. Notice how you don’t see fake “Download Now” sites as often anymore? They got punished. Gone are the days of trying to download a specific file, clicking the download button, and being redirected to some form or survey you have to fill out.

Saturation

Ads on a site are fine, but there is a line, and if you cross it, Google can punish you for it. Excessive ads or tons of affiliate links on a page can put your site on Google’s bad side. You don’t want that.

Unrelated Content

Google favours authority sites. If your site has tons of articles on unrelated topics, it will most likely lose its rankings. Google sees broad-topic sites as trying to rank for any keyword out there just for the sake of traffic. In practice, this isn’t a good strategy anyway, since you never define a target audience.

Mobile Unfriendly

Everyone Googles on their phone. If your site isn’t set up for mobile or isn’t responsive, your rankings will suffer. User experience is an SEO factor, so if your site is hard to navigate and read in a phone’s browser, say goodbye to your rankings.

Google’s Algorithm in 2019

Content is king. If you want your site to be safe from algorithm updates, your best bet is to keep things natural and keep providing value, good content, and a positive user experience. Keep your blog topics relevant, and don’t bother stuffing keywords. Remember, readability and user experience play a part in your rankings. On top of that, make sure your technical SEO is up to par!